We propose an efficient encoder-decoder architecture for neural source separation, called spectral feature compression (SFC), which compresses the input using a single sequence modeling module, making the compression both input-adaptive and parameter-efficient.

Research Projects

A novel framework for pre-training environmental sound analysis models on acoustic signals synthesized parametrically with formula-driven methods.

Onset-and-Offset-Aware Sound Event Detection via Differentiable Frame-to-Event Mapping

SED-HSMM couples a neural acoustic model with a hidden semi-Markov model to deliver event-wise sound detection with precise boundaries.

Neural FCASA for Conversation Analysis

Neural blind source separation and diarization for distant speech recognition that works with weak supervision.

This paper revisits single-channel audio source separation based on a probabilistic generative model of a mixture signal defined in the continuous time domain.

Time-Varying Neural FCA

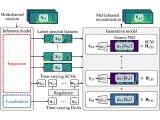

This paper presents an unsupervised multichannel method that can separate moving sound sources based on an amortized variational inference (AVI) of joint separation and localization.

Neural Full-Rank Spatial Covariance Analysis

This paper describes a neural blind source separation (BSS) method based on amortized variational inference (AVI) of a non-linear generative model of mixture signals.

Speech Enhancement Based on Robust NTF

This paper presents a blind multichannel speech enhancement method that can deal with the time-varying layout of microphones and sound sources.